The Abuja Call for Action identifies as its first priority the need for countries to understand the scale of the literacy challenge. The example of Kenya, which was shared with participants in Abuja, provides an excellent way of operationalising this. The author is Director of the Department of Adult Education in Kenya.

The Kenya National Adult Literacy Survey (KNALS) was conducted between June and August 2006 by the Kenya National Bureau of Statistics (KNBS) in collaboration with the Department of Adult Education, UNESCO Nairobi Office and other key partners. The purpose was to generate accurate data on the status of literacy in the country. The specific objectives were to:

Kenya was among five developing countries selected to pilot the Literacy Assessment and Monitoring Programme (LAMP) developed by the UNESCO Institute for Statistics. Kenya needed the data urgently to expand and strengthen its national literacy programme and, after participating in the programme for two years, the country realised that the LAMP developmental phase was taking too long. The LAMP methodology was also very expensive: according to the advice received, each language would have required a sample of 2,250. Kenya was also uncomfortable with the LAMP assessment items. A critical analysis of the 12 filter module assessment questions adopted by LAMP from the International Adult Literacy Survey (IALS) revealed that 90 % of these items would not be culturally relevant to the Kenyan situation. Kenya therefore decided to combine some of the methodologies learnt from LAMP with approaches used by the Southern and Eastern Africa Consortium for Monitoring Educational Quality (SACMEQ) to develop assessment items tailored specifically to the Kenyan culture and context. We are, however, greatly indebted to LAMP for the training received in developing the literacy assessment items.

The sample for the KNALS covered all eight Kenyan provinces: Central, Coast, Eastern, Nairobi, North Eastern, Nyanza, Rift Valley and Western. A probability sample of about 18,000 households was selected to allow for separate estimates of key indicators for each of the provinces and districts in the country and for urban and rural areas. The survey used a two-stage sample design. The first stage involved selecting clusters from the national master sample maintained by the KNBS. A total of 1,200 clusters, comprising 377 urban and 823 rural clusters, were selected from this master frame. The second stage involved the systematic sampling of households from a list of all households in each cluster. Eighteen households were sampled from each of the clusters. The household listing was updated in 2005 while preparing for the Kenya Integrated Household Budget Survey (KIHBS). KNBS experts in Nairobi selected the clusters and households for the survey, and the sample lists were given to survey supervisors. All members of a selected household aged 15 years and above were eligible for inclusion in the literacy survey. However, only one eligible member from each household was randomly selected to complete the individual questionnaire and test items.
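
The two-stage design described above can be illustrated with a minimal sketch. It is not the KNBS procedure: the toy frame, the cluster counts, the function names and the use of simple random selection of clusters within the urban and rural strata are all assumptions made for illustration, since the article does not say how clusters were drawn from the master sample. Only the systematic sampling of 18 households per cluster and the random choice of one member aged 15 or above follow the text.

```python
import random


def systematic_sample(items, n, rng):
    """Systematic sampling: random start, then a fixed (fractional) interval."""
    if n > len(items):
        raise ValueError("sample size exceeds list length")
    interval = len(items) / n
    start = rng.random() * interval
    last = len(items) - 1
    # min() guards against a floating-point edge case at the end of the list
    return [items[min(int(start + i * interval), last)] for i in range(n)]


def select_respondent(household_members, rng, min_age=15):
    """Pick one eligible member at random; None if nobody is eligible."""
    eligible = [m for m in household_members if m["age"] >= min_age]
    return rng.choice(eligible) if eligible else None


rng = random.Random(2006)  # fixed seed so the sketch is reproducible

# Hypothetical toy frame: stratum -> cluster id -> household listing
frame = {
    "urban": {f"U{c}": [f"U{c}-H{h}" for h in range(100)] for c in range(40)},
    "rural": {f"R{c}": [f"R{c}-H{h}" for h in range(100)] for c in range(90)},
}
clusters_per_stratum = {"urban": 4, "rural": 8}  # toy values; the survey used 377 and 823
HOUSEHOLDS_PER_CLUSTER = 18  # as stated in the text

sample = {}
for stratum, clusters in frame.items():
    # Stage 1 (assumed): simple random selection of clusters within each stratum
    chosen = rng.sample(sorted(clusters), clusters_per_stratum[stratum])
    for cluster_id in chosen:
        # Stage 2: systematic sample of households from the cluster listing
        sample[cluster_id] = systematic_sample(
            clusters[cluster_id], HOUSEHOLDS_PER_CLUSTER, rng
        )

# Within a household, one eligible member (aged 15+) completes the test
household = [
    {"name": "child", "age": 9},
    {"name": "adult_1", "age": 34},
    {"name": "adult_2", "age": 61},
]
respondent = select_respondent(household, rng)
```

Because only one adult per household is tested, adults in larger households have a lower chance of selection; a full analysis would normally reflect this in the survey weights.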

Four literacy assessment instruments were developed in consultation with a broad spectrum of stakeholders including the University of Nairobi, Ministry of Education, Kenya National Examinations Council, faith-based organisations, civil-society groups and community-based groups to ensure that they were culturally relevant.

There are 42 different ethnic groups in Kenya and 18 major language groups. Since the government's language of instruction policy is to use indigenous languages at the basic literacy level and introduce Kiswahili and English at the post-literacy level, the survey was conducted in the main local languages as well as English and Kiswahili to accommodate the prevailing cultural and linguistic diversity. Two translators for every language or dialect were engaged to translate the test items. Each translation was followed by a reverse translation, a common procedure used to make sure that a source text is understandable and to trace any inaccuracies or ambiguities.

A household questionnaire was used during the survey to list all members in the selected households. This collected information relating to gender, age, marital status, religion, tribe, school/centre attendance, educational attainment, disability and employment for all household members aged five years and above. Based on this basic information, all eligible members of the household were identified and one selected randomly to complete the individual questionnaire and assessment.

The individual questionnaire collected the following information:

An institutional questionnaire, administered to sampled adult education centres, collected information on issues relating to the provision of adult education. The questions covered the following aspects:

As well as collecting information on self-assessment of literacy levels, the KNALS included a literacy assessment test for all selected respondents. This provided information such as whether the respondents could read and understand instructions or read and make use of the information provided. Unlike past literacy surveys, where respondents who had attended school up to a particular level were assumed to be literate, all respondents were subjected to the same test. The KNALS thus measured literacy through direct assessment of men and women aged 15 years and above, focusing on three skills: reading, writing and numeracy.

A Steering Committee composed of permanent secretaries (Ministry of Planning and Ministry of Gender, Sports, Culture and Social Services), the directors of relevant departments and representatives of development partners provided policy guidance and mobilised resources. A Technical Committee comprising representatives of the KNBS, the Department of Adult Education (DAE), UNESCO, the Ministry of Education, the Kenya National Examinations Council (KNEC), the University of Nairobi and the Kenya Institute of Education (KIE) managed and implemented the survey. Coordinators at national and regional levels were responsible for ensuring the implementation of the survey in their respective areas. Twenty-six field supervisors were in charge of the data collection teams, assigning work and liaising with the District Statistical Officers and the District Adult Education Officers, while 99 research assistants were expected to identify the selected households, undertake household and individual interviews and administer, mark, score and record the results of the literacy assessment tests.

The field supervisors and research assistants took part in a week-long training course. Topics included the background and objectives of the survey, key concepts and definitions, use of cluster maps, the role of research assistants, interviewing techniques, completion of questionnaires and summary forms, field procedures and all other aspects of undertaking a survey. The trainees were then taken through all the questionnaires, looking at the content of each item to be tested. Practical exercises were undertaken in and outside training sessions. Mock interviews and field tests were also conducted during the training period to ensure that quality data would be collected in the field. Trainers were drawn from KNBS, DAE, the Ministry of Education and the Ministry of Gender, Sports, Culture and Social Services (MOGSCSS) headquarters, the KNEC and the University of Nairobi. Supervisors were drawn from experienced officers in both the DAE and KNBS.

A total of 26 interviewing teams carried out the data collection during the main survey. Each team was composed of one supervisor, up to four research assistants and a driver. Data was collected over a two-month period from June 8 to August 8, 2006. The supervisors liaised with the district statistical officers to get access to cluster files and with the district adult education officers to identify institutions and fill in the institutional questionnaires. They also edited all questionnaires and moderated the tests by re-marking a sample of the literacy assessment instruments to ensure quality.

Completed, field-edited questionnaires were sent to KNBS offices in Nairobi for data capture and further editing. Data processing consisted of re-editing, recoding (particularly of the labour module), data entry, verification and data cleaning. After cleaning, the data was weighted to conform to the known population parameters. A team of 14 data entry clerks and two supervisors was engaged for 60 days. The following data processing programmes were used: CSPro (Census and Survey Processing System), SPSS (Statistical Package for the Social Sciences) and RUMM software for analysing assessment and attitude questionnaire data, based on the Rasch analysis technique.
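
Weighting a sample so that it conforms to known population parameters can be sketched as follows. This is a simple post-stratification example under stated assumptions, not the KNBS weighting scheme, which the article does not describe: the cells, population totals, records and function names are all hypothetical.

```python
from collections import Counter


def poststratification_weights(sample_cells, population_totals):
    """Weight per cell = known population total / number of respondents in the cell."""
    counts = Counter(sample_cells)
    missing = set(counts) - set(population_totals)
    if missing:
        raise KeyError(f"no population total for cells: {sorted(missing)}")
    return {cell: population_totals[cell] / counts[cell] for cell in counts}


def weighted_rate(records, weights):
    """Weighted share of respondents classified as literate."""
    total = sum(weights[r["cell"]] for r in records)
    literate = sum(weights[r["cell"]] for r in records if r["literate"])
    return literate / total


# Hypothetical toy data: urban respondents are over-represented in the sample
records = [
    {"cell": "urban", "literate": True},
    {"cell": "urban", "literate": True},
    {"cell": "urban", "literate": True},
    {"cell": "urban", "literate": False},
    {"cell": "rural", "literate": True},
    {"cell": "rural", "literate": False},
    {"cell": "rural", "literate": False},
    {"cell": "rural", "literate": False},
]
population_totals = {"urban": 1000, "rural": 3000}  # assumed known totals

weights = poststratification_weights([r["cell"] for r in records], population_totals)
unweighted = sum(r["literate"] for r in records) / len(records)
weighted = weighted_rate(records, weights)
print(f"unweighted: {unweighted:.3f}, weighted: {weighted:.3f}")
```

In this toy case the unweighted rate overstates literacy because the better-performing urban cell makes up half of the sample but only a quarter of the assumed population.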

The following were some of the key findings of the KNALS:

In general, the survey showed that the country had a national adult literacy rate of 61.5 % and a numeracy rate of 64.5 %, indicating that more people were competent in computation than in reading. The critical finding was that on average 38.5 % (7.8 million) of the Kenyan adult population was illiterate, which is a major challenge, given the central role literacy plays in national development and the empowerment of individuals to lead a fulfilling life. Another critical finding was that the age cohort 15 to 19 years recorded a literacy rate of 69.1 %. This implies that within this age group 30.9 % are illiterate.
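
The headline figures can be cross-checked with simple arithmetic. The sketch below uses only the rates and the 7.8 million count quoted in the text; the function names are illustrative, and the implied size of the adult population is a derived estimate rather than a figure reported by the survey.

```python
def complement(rate_percent):
    """Illiteracy rate implied by a literacy rate, in percent."""
    return round(100.0 - rate_percent, 1)


def implied_population(count_millions, share_percent):
    """Population size implied by a count and the share it represents."""
    return count_millions / (share_percent / 100.0)


national_illiteracy = complement(61.5)  # 38.5 % of adults
adults_millions = implied_population(7.8, national_illiteracy)
youth_illiteracy = complement(69.1)  # age cohort 15 to 19 years

print(f"National illiteracy rate: {national_illiteracy} %")
print(f"Implied adult population: about {adults_millions:.1f} million")
print(f"Illiteracy rate, ages 15 to 19: {youth_illiteracy} %")
```

On these figures, 7.8 million illiterate adults at a rate of 38.5 % implies an adult population of roughly 20 million, and a literacy rate of 69.1 % for the 15 to 19 cohort corresponds to an illiteracy rate of 30.9 %.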

The survey revealed that women performed worse than men in both reading and numeracy: men recorded rates of 64.2 % in reading and 67.9 % in numeracy, compared with 58.9 % and 61.4 % respectively for women. Perhaps because of this, more women than men participate in adult literacy programmes.

The survey also showed variations in literacy levels by region. Urban areas recorded higher rates than rural areas. For example, Nairobi, the capital city, had an adult literacy rate of 87.1 %, while North Eastern Province had an adult literacy rate of 9.1 %. The regional disparities confirm the trend whereby areas that are economically well off have a head start in terms of academic achievement compared to poor areas.

Although the national literacy rate was estimated at 61.5 % indicating that 38.5 % were illiterate, the survey also revealed that only 29.6 % of the adult population had acquired the desired mastery level of literacy. This means that the majority of those people measured as literate (61.5 %) are at risk of losing their literacy skills or cannot effectively perform within the context of knowledge economies.
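
A short calculation shows why those below the mastery level form a majority of the literate group. It assumes that the 29.6 % at mastery level are a subset of the 61.5 % measured as literate, which the text implies but does not state; the variable names are illustrative.

```python
LITERATE_PERCENT = 61.5  # adults measured as literate
MASTERY_PERCENT = 29.6   # adults at the desired mastery level

# Percentage points of the adult population that are literate but below mastery
below_mastery_points = LITERATE_PERCENT - MASTERY_PERCENT

# Shares within the literate group (assumes mastery is a subset of literate)
share_below_mastery = below_mastery_points / LITERATE_PERCENT
share_with_mastery = MASTERY_PERCENT / LITERATE_PERCENT

print(f"Literate but below mastery: {below_mastery_points:.1f} points of the adult population")
print(f"Share of literate adults below mastery: {share_below_mastery:.1%}")
print(f"Share of literate adults at mastery: {share_with_mastery:.1%}")
```

Under this assumption, about 31.9 percentage points of the adult population are literate without having reached mastery, which is slightly more than half of all those measured as literate.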

Girls Forum Peleleza Primary School in Likoni, Kenya, Source: ActionAid

The survey revealed that monitoring and evaluation of adult and continuing education (ACE) programmes was inadequate because the supervisors were ill-equipped to reach all the learning centres. It also revealed that learning centres lacked adequate and relevant teaching and learning materials. Most of the learning venues were community-owned (schools, churches, mosques, community halls, etc.) and the furniture in most of these venues was inappropriate for adult learners and learning. Self-help and part-time teachers were found to lack adequate skills for teaching adult classes.

Although there are teachers fully paid by the government, the majority of the teachers working in ACE programmes are self-help and part-time teachers who are paid a token for volunteering to teach adults. Even those working in classes run by non-state actors are not well remunerated. This scenario helps to explain the low calibre of facilitators being attracted to the ACE profession and the low morale exposed by the survey.

The study revealed that only 31 % of the Kenyan adult population was aware of the existence of the ACE programmes. The Department of Adult Education has at least one centre in every administrative location but, because of the size of these areas, not everyone is aware of the centre.

The survey report was launched in March 2007 in Nairobi. The participants were drawn from government, faith-based organisations, civil society organisations and development partners. The findings have also been disseminated at regional meetings in Nyanza, Rift Valley, Coast and Eastern provinces, and plans are underway to disseminate the report in the rest of the provinces and districts. There is a need for further dissemination at the grassroots level so that the population can understand their literacy position and design appropriate actions to tackle the low literacy levels. Recognising the importance of these findings now that Kenya has a new team of political players, there are also plans to disseminate the report to the new members of parliament. The Department is also using the results of the survey to lobby the Government for more funding.

The Government of Kenya is grateful for the financial support received from the Canadian International Development Agency (CIDA), the Department for International Development (DFID) and the German Adult Education Association. The Department is also grateful to Dr. Njora Hungi of the Southern and Eastern Africa Consortium for Monitoring Educational Quality (SACMEQ), the UNESCO International Institute for Educational Planning (IIEP), and Dr. Susan Nkinyangi of UNESCO for the technical support provided during the survey.

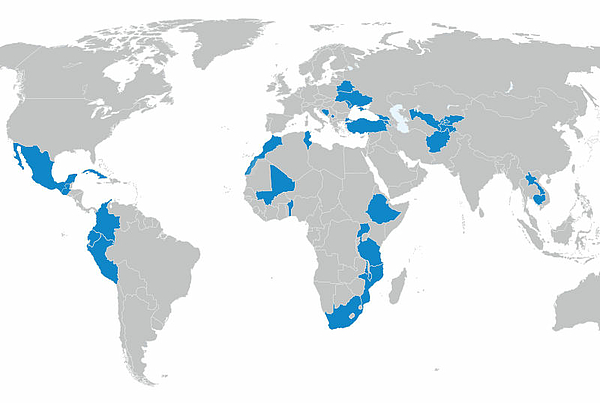

DVV International operates worldwide with more than 200 partners in over 30 countries.

To interactive world map